In 2021, No Time to Die marked the most recent chapter in the iconic James Bond franchise. In this nearly three-hour nail-biter, Daniel Craig stepped into the shoes of the world’s most famous spy for the final time, wrapping up a 15-year run across five films. More than four years later, the rumour mill is still in overdrive. Who will be the next name in the legendary lineup alongside Craig, Sean Connery, George Lazenby, Roger Moore, Timothy Dalton and Pierce Brosnan? There you go, a handy refresher for your next quiz night. Plenty of names are circulating, but for now, official confirmation remains under wraps.

At nocomputer, we do not have a concrete successor in mind either, and it is not really our place to appoint one. What we can do, however, is make a few suggestions. And when it comes to making those suggestions, we do it the way we know best: by putting technology to work creatively.

Partena Professional is a leading Belgian HR and payroll partner, supporting entrepreneurs, SMEs and large enterprises with everything from personnel management and payroll administration to social legal advice. Their people-first approach does not stop at their clients. Internally, they invest heavily in an engaged and connected company culture. Their annual staff party, Fiesta, is a perfect example of this mindset. During this large-scale event, colleagues from across the organisation come together to celebrate achievements, exchange experiences and, above all, enjoy themselves with good food and drinks.

The February 2026 edition was once again packed with inspiring conversations, engaging interviews and sharp keynotes that shaped a dynamic programme. All these different components needed to flow seamlessly into one another. That is exactly where nocomputer came in. Partena Professional had the bold idea of presenting fully AI-generated cinematic cutscenes, or short films, throughout the event, all in true James Bond style.

The leading roles in these six short films were played by none other than:

And if we may say so ourselves, their acting debuts were more than convincing.

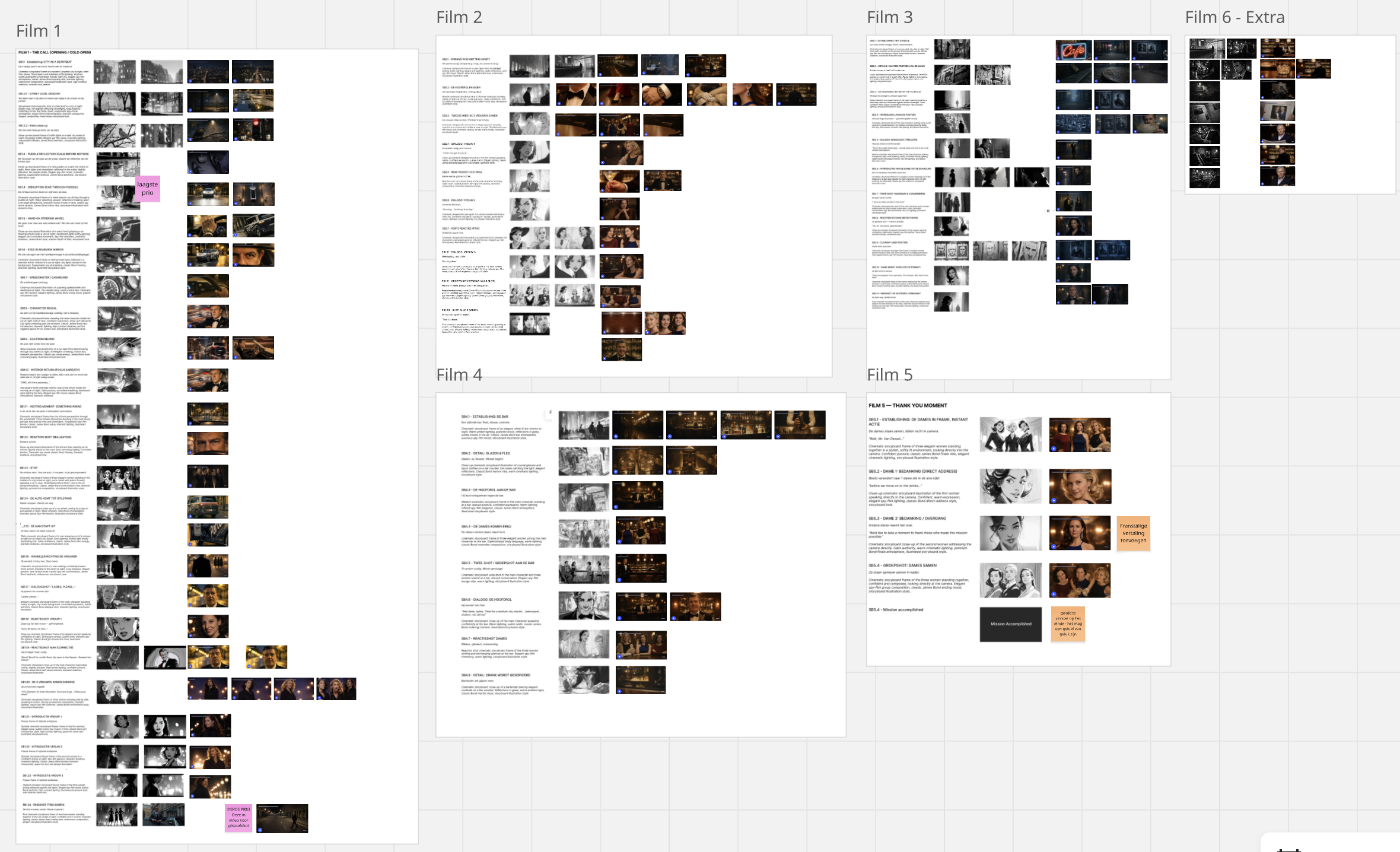

Directors don’t just start filming random people, places or events and hope to stitch a coherent storyline together afterwards. First comes the script. It’s written, rewritten and refined, with every detail carefully considered. We may not have been professional directors, but we did know better than to start from a blank page. Fortunately, we didn’t have to. Creative designer Alexander Debrabandere had already created illustrations for every single scene of each short film. These detailed black-and-white storyboards set the tone, defined the composition and brought the characters to life, while mapping out much of the narrative well in advance.

The chosen look and feel was unmistakably inspired by the classic 1960s Bond films that catapulted the franchise to global fame. Seasoned spy movie aficionados would instantly spot the storyboard’s nods to timeless titles such as Dr. No, Goldfinger and You Only Live Twice.

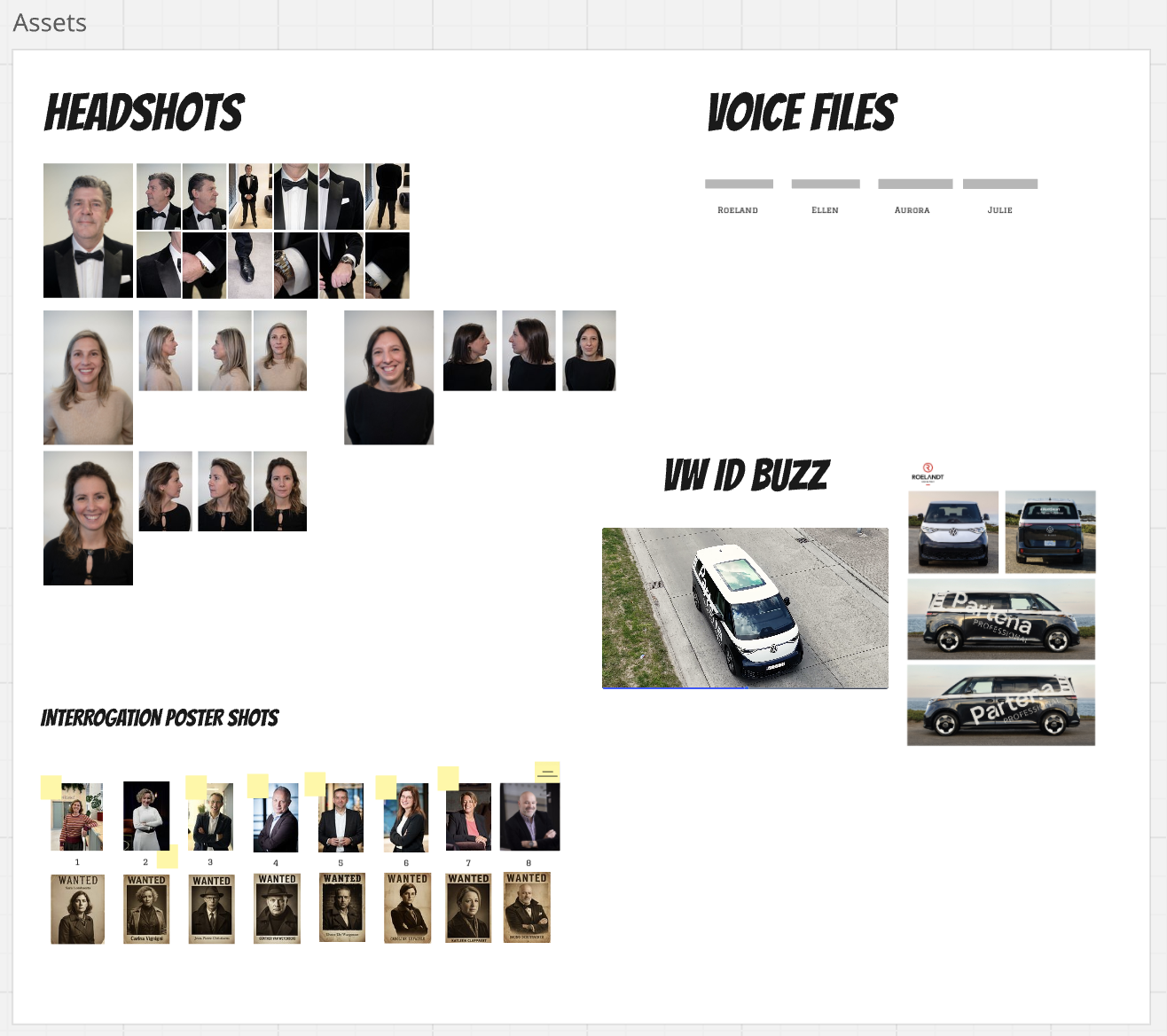

With the environment, atmosphere and overall vibe of the short films clearly defined, it was time to move on to character design. To capture them as accurately as possible on screen, we sent a videographer on site to shoot professional, high-quality footage of the main characters. Lead actor Roeland Van Dessel even posed in his own Bond suit for the occasion, the very outfit he would be wearing on the night of the event. It quickly became clear that consistency would be absolutely key.

We also shot close-ups of the three Bond girls and created a ten-minute audio recording for each of the four characters. Those recordings would later serve as the foundation for voice cloning. To wrap things up, we received photos of a Volkswagen ID. Buzz branded with the Partena Professional logo, along with additional images of colleagues set to appear in specific scenes.

All these assets were collected in Miro, our central hub for collaboration and overview. Miro is a visual online workspace where teams can brainstorm, design and plan on an infinite digital canvas. Text, sticky notes, images and videos can all be structured and refined in one place. For this project, that kind of environment proved invaluable. It gave us a clear overview of every scene, visual and iteration, allowed us to share progress transparently with Partena Professional, and kept our internal collaboration structured and efficient.

With a tight deadline ahead, it was time to unleash our prompt experts.

Before generating the first visuals, two critical elements needed alignment with Partena Professional: the voices of the four lead characters and their wardrobe choices, not only for the three female leads, but also for the protagonist himself.

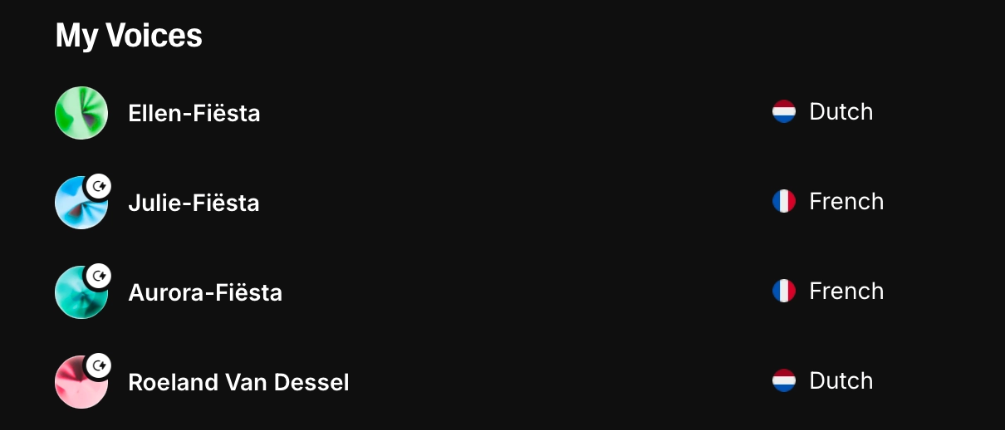

For voice cloning, we used ElevenLabs, AI software capable of analysing and synthetically recreating human speech. Using just a handful of snippets from the audio recordings, we were able to recreate the four main characters’ voices in no time.

Costuming also played a crucial role. As mentioned earlier, Roeland Van Dessel would be wearing the very suit featured in the films during the event itself, so every single detail had to be spot on. In several of the short films, the three women appeared in an elegant bar setting inspired by the 1960s. To avoid visual inconsistencies, we deliberately selected three distinct and sophisticated outfits that clearly set them apart. Hairstyles were considered just as carefully, ensuring each character had a recognisable and unique presence.

Once we received approval for both the voices and styling, we could finally begin generating!

In theory, we could have generated a video straight away using a detailed prompt, optionally supported by a storyboard illustration. In practice, we deliberately chose a different approach. We first created a still image and then used that as the starting frame for the video generation model. There were several strong reasons for this decision.

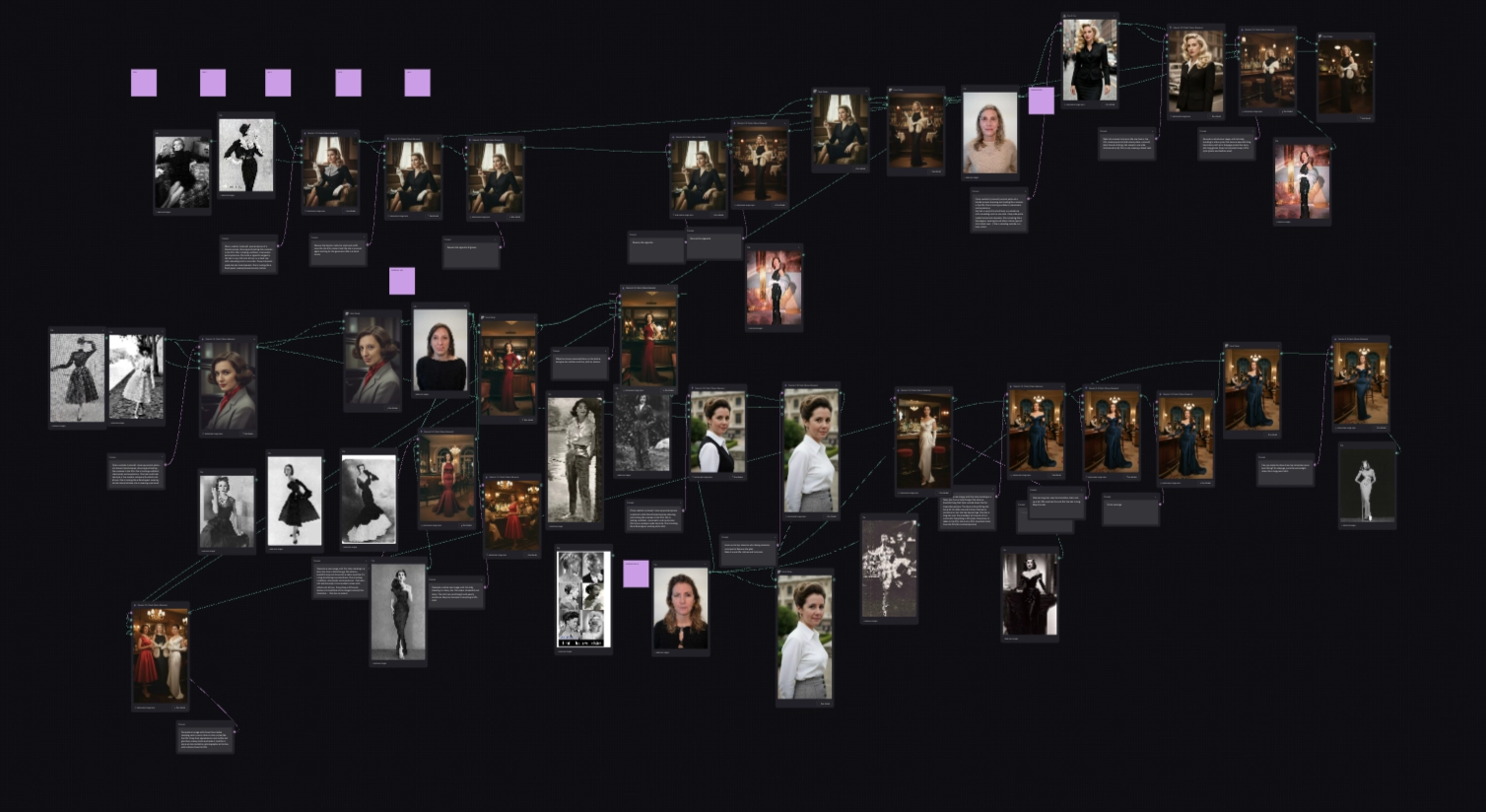

Our goal was to bring the storyboard visuals to life as accurately as possible. Given its advanced features and the fact that multiple team members were working simultaneously, we chose Weavy as our central creative environment.

Weavy is a platform that enables designers, artists and teams to build, automate and scale complex workflows within a single environment, eliminating the need to constantly switch between separate tools. It combines generative AI models for image and video with professional editing capabilities such as masking, compositing, colour correction and image upscaling. It also operates using node-based workflows, where different elements and edits are connected visually on a canvas. This creates a clear, modular flow that can easily be shared with the team.

Over time, these flows can become quite impressive. Or intimidating, depending on your perspective.

This image-generation stage accounted for the bulk of the work. Once we were fully satisfied with an image (in a 16:9 aspect ratio and at least 1080p resolution), we used it as the starting frame for video generation. That was the moment the project finally came to life.
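For the more technically minded: the still-image requirements above boil down to a simple check. The sketch below is purely illustrative and assumes the thresholds from our brief (an exact 16:9 ratio and a height of at least 1080 pixels); `is_valid_start_frame` is a hypothetical helper of our own, not a feature of Weavy or Flow.

```python
from fractions import Fraction

# Hypothetical thresholds taken from the project brief, not from any tool's settings.
TARGET_RATIO = Fraction(16, 9)
MIN_HEIGHT = 1080  # "at least 1080p"


def is_valid_start_frame(width: int, height: int) -> bool:
    """Return True if a still is exactly 16:9 and at least 1080 pixels tall."""
    if width <= 0 or height <= 0:
        return False
    # Fraction reduces width/height exactly, so 3840x2160 compares equal to 16/9.
    return Fraction(width, height) == TARGET_RATIO and height >= MIN_HEIGHT


if __name__ == "__main__":
    for size in [(1920, 1080), (3840, 2160), (1280, 720), (1080, 1080)]:
        print(size, is_valid_start_frame(*size))
```

Using an exact fraction rather than a floating-point comparison keeps the check strict: a 4K still passes, while a 720p or square image is flagged before it ever reaches the video model.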

To bring the carefully crafted images to life in video format, we turned to Flow, a standalone filmmaking tool developed by Google specifically for creatives. It seamlessly integrates a range of generative models, such as Veo for video, Imagen for image generation and Gemini for language, all within a single, user-friendly interface.

Flow proved intuitive and offered the perfect environment to keep the project moving at pace. For each video generation, we were able to fine-tune several parameters: resolution (with the option to upscale even further after downloading the video), the number of generations, whether or not to generate audio, and more. Crucially, the tool allowed us to provide a starting frame to guide the video. In some cases, we also experimented with an end frame to prevent the model from introducing unexpected or completely random events. That said, the ultimate power still lies in the prompt itself. We didn’t just outline the actions that needed to take place; we specified camera angles, visual effects and other cinematic details. In essence, the prompt became a compact director’s brief, packed with all the elements the model needed to bring the scene to life.
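To make the idea of a prompt as a director’s brief more tangible, here is a minimal Python sketch. It is an illustration only: the `ShotBrief` class and its fields are hypothetical names of our own, not part of Flow or Veo, and the sample scene is invented. It simply shows how action, camera and style instructions can be kept as separate building blocks and assembled into one prompt.

```python
from dataclasses import dataclass, field


@dataclass
class ShotBrief:
    """Hypothetical structure for one video prompt; field names are illustrative."""
    action: str           # what happens in the shot
    camera: str           # camera angle and movement
    style: str            # overall look and period styling
    effects: list[str] = field(default_factory=list)  # optional visual effects

    def to_prompt(self) -> str:
        # Assemble the parts in a fixed order so every prompt follows the same brief.
        parts = [self.action, f"Camera: {self.camera}", f"Style: {self.style}"]
        if self.effects:
            parts.append("Effects: " + ", ".join(self.effects))
        return ". ".join(parts) + "."


if __name__ == "__main__":
    brief = ShotBrief(
        action="A man in a dark suit walks into a dimly lit bar",
        camera="slow dolly-in at eye level",
        style="1960s spy film, warm tungsten lighting",
        effects=["light film grain"],
    )
    print(brief.to_prompt())
```

Keeping the elements separate makes it easier to change one detail, such as the camera move, without accidentally rewording the rest, which matters given how sensitive the models are to small changes in phrasing.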

Once a video was generated and approved in Flow, it followed one of two paths. Videos without speech were downloaded and uploaded to Miro and Google Drive. Videos with speech required an additional step. Flow didn’t allow us to modify voice audio directly, so we returned to ElevenLabs. In AI Voice Studio, we uploaded the video and replaced the voice in just a few clicks with the previously cloned version. After final approval, the video was exported and added to Miro and Google Drive.

When all scenes for a specific film were ready, the real editing began. That was when everything was fused into a true James Bond short film.

Our videographer then got to work, transforming the material into one cohesive and compelling film. This was far more than simple cutting and pasting.

The videographer was the first to see a complete version of the final result. He assessed whether everything flowed logically, whether visual inconsistencies remained, whether the pacing worked and whether additional atmospheric shots were required. In short, did this feel like a genuine cinematic short film worthy of a large-scale event? That first edit also marked the first internal feedback round for our prompt engineers.

After incorporating internal feedback, the completed versions were presented to Partena Professional. We felt that one feedback round per film would suffice. Thanks to our thorough preparation and the close collaboration with Partena Professional on the Miro board, the versions we presented were already nearly spot on. Any remarks were minor refinements at most. Occasionally, a word in a particular shot wasn’t entirely legible, a different camera angle worked better for a specific scene, or the French or Dutch translation of the English dialogue could use a slight polish.

Following these final refinements, the six short films were ready. Fully polished, Bond-inspired and prepared to surprise the Fiesta audience in style.

With a project of this scale, things don’t always go perfectly smoothly… and these six short films were no exception. Challenges popped up in different shapes and sizes, and at almost every stage of the process.

One of the biggest recurring headaches, both in image and video generation, was maintaining consistency. Often it came down to small details: earrings subtly (or not so subtly) changing, rims on the Volkswagen van suddenly looking a bit off, or background street lights shifting from one scene to the next. But sometimes the change wasn’t small at all: we also ran into cases where a character looked completely different in one image compared to another. On top of that, we were reminded just how sensitive prompts are: leave out a single word, or phrase something slightly differently, and the entire output could change dramatically.

When it came to stills specifically, we ran into a few recurring issues:

The videos had their own share of bumps along the way:

The rule of thumb throughout this project? Try again. And again. Tweak the prompt. Test a different model. Call the model your “friend” or “buddy” in the hope that it finally delivers exactly what you had in mind. Iterate until it clicks.

Every so often, the media lights up with outrage over yet another fully AI-generated ad campaign. Questions around authenticity, creativity and the role of AI pop up everywhere. For this particular event, however, there were clear and deliberate reasons for choosing artificial intelligence.

First and foremost, the event itself revolved around AI and technology. What better way to underline that theme than by weaving it into the very fabric of the evening? AI was not just the topic of conversation; it became the creative engine that tied the night together, showcasing the technology’s potential in an engaging and entertaining way.

Then there was the unmistakable 1960s James Bond aesthetic. Recreating that world authentically, from wardrobe and hairstyles to lighting, cars and set design, would have required serious production resources. With AI, we were able to explore and refine that styling far more flexibly. A perfectly lit retro bar, a close-up in classic Bond framing, a dramatic scene in a shadowy alley? AI made those creative leaps not only possible, but efficient.

And finally, practicality. In theory, we could have filmed everything the traditional way. In reality, we were working with a CEO and senior communication professionals, not trained actors with days to spare for rehearsals and multiple shooting schedules.

Projects like this are a reminder that we are very much in a transitional phase. Our project was barely a week old when an AI-generated video created with Seedance 2.0, a model from China’s ByteDance, featuring Tom Cruise and Brad Pitt in a fight scene, stirred up controversy in Hollywood. Models like these will keep emerging, and a shift is undoubtedly on the horizon. It is entirely possible that many of the challenges we encountered during this project will soon become far less prominent. At the same time, the dream (or fear) of fully automated, Hollywood-level filmmaking is unlikely to materialise overnight.

If anything, this project proved the opposite. Creating AI-driven films like these is not a matter of snapping your fingers. Behind every short video sat countless iterations, refined prompts, model switches, regenerated images and re-rendered sequences. It demanded creative vision, technical insight, aesthetic sensitivity and a healthy dose of trial and error. It may be old news, but it is worth repeating: AI is a powerful tool, yet it still requires direction, craftsmanship and critical thinking.

To put things into perspective: for six short films totalling less than five minutes of footage, we generated more than 350 videos. And that is just the video files. There is no telling how many initial and final frames were created along the way. What the audience saw at the event was the polished tip of a much larger creative iceberg.

Perhaps that is the most important takeaway. AI will not replace creativity, but it will reshape it. The technology challenges us to think differently, to experiment more boldly, and to merge storytelling and technology in ways that would have seemed far-fetched only a few years ago.

A successor to James Bond may still be pending. A new way of approaching storytelling, however, is already here. And at nocomputer, we are more than ready to help shape it.